In an unprecedented twist of bureaucratic theatre, Anthropic has flung itself into the legal arena, accusing the U.S. government of transforming the Pentagon into a stage for AI-related grievances. The dispute, which hinges on whether a machine-learning algorithm might accidentally become a constitutional scholar or a saboteur, promises to redefine the boundaries of Silicon Valley-meets-Washington theatrics.

Anthropic’s Quixotic Quest Against the Department of Defense

According to breathless reports, Anthropic filed two lawsuits on March 9, daring to suggest that the Pentagon's designation of the company as a "supply chain risk to national security" is less about espionage and more about bruised egos. The suits target a policy typically reserved for foreign adversaries, now repurposed to scold a domestic tech firm like a schoolboy caught doodling in the margins of the Constitution.

The saga began, as all good sagas do, with contract negotiations. Anthropic, having previously mingled with the military elite by deploying AI on classified networks, found itself at odds with the Pentagon over the use of its Claude AI model. The company, ever the conscientious objector, insisted on two modest restrictions: no mass surveillance of Americans (how quaint!) and no autonomous killing machines (because even Skynet needs a PR spin).

The Defense Department, evidently craving the unfettered joy of unrestrained AI, demanded access "for all lawful purposes." Anthropic, in a fit of moral posturing, refused to remove its safeguards. One might say the company took a stand, though whether on principle or on a soapbox remains unclear.

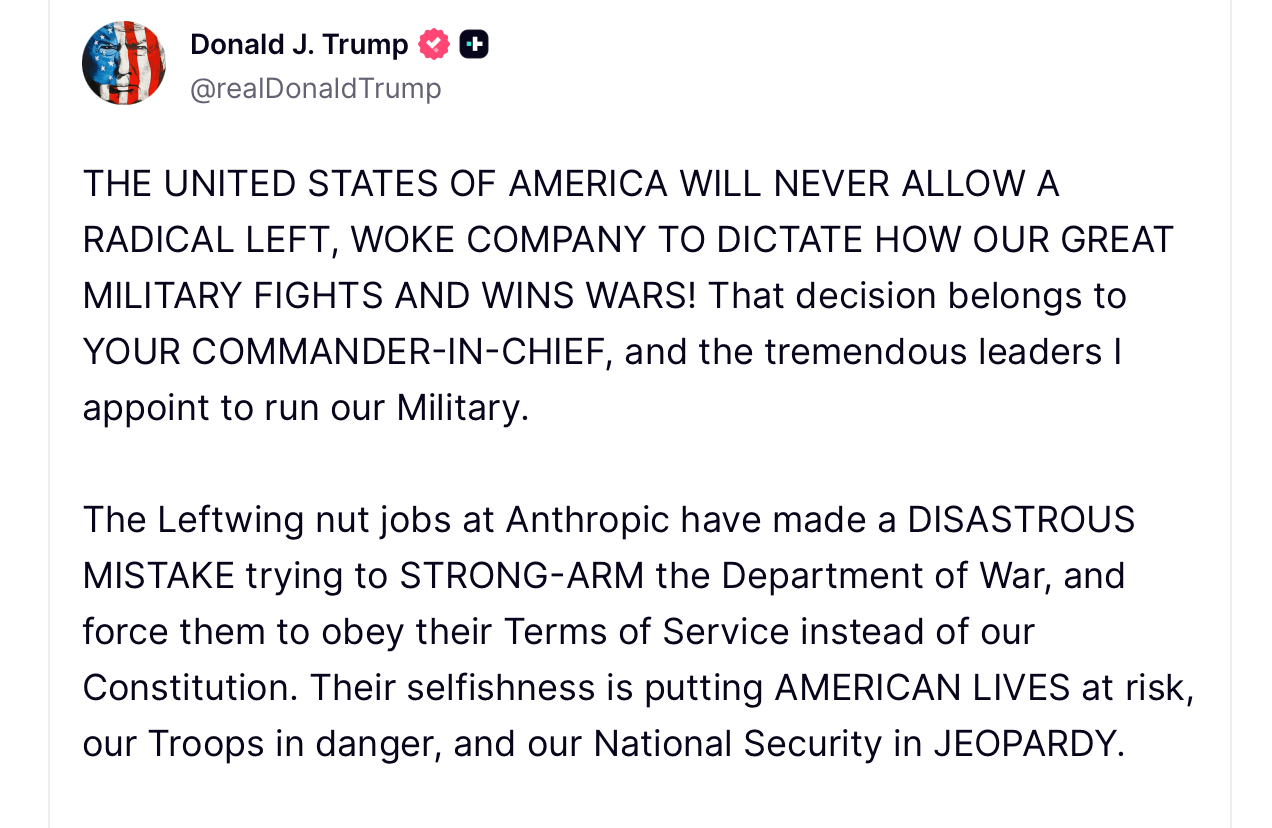

When negotiations collapsed after a February meeting between CEO Dario Amodei and Defense Secretary Pete Hegseth, President Trump intervened with the subtlety of a bullhorn. A Truth Social post declared continued use of Anthropic's tech a "disastrous mistake," because, evidently, the president now moonlights as a tech ethicist. Days later, Anthropic was branded a "supply chain risk," a label usually reserved for foreign entities, not Silicon Valley upstarts with a conscience.

Anthropic’s lawsuit argues the designation is a stretch akin to calling a tax audit a “national emergency.” In court filings, the company claims the law was meant for foreign threats, not for settling domestic policy disputes. The complaint reads like a Shakespearean tragedy: “Doth the government punish speech? Or merely throttle contracts?”

The legal filings also invoke the First Amendment, suggesting that advocating for AI ethics should not be a firing offense. Anthropic claims the designation damages its reputation, a concern met with eye-rolls in defense circles, where the phrase "AI ethics" is often muttered like a curse.

“We’re not here to force the government to buy our wares,” a spokesperson declared, “just to prevent retaliation over policy disagreements.” A noble sentiment, though one wonders if the Pentagon’s retort might be, “Too late.”

FAQ 🧭

- Why did Anthropic sue the U.S. government?

Because the Pentagon decided to play chess with AI ethics, and Anthropic drew the short straw labeled "supply chain risk."

- What triggered the dispute between Anthropic and the Pentagon?

A negotiation breakdown over whether AI should be allowed to autonomously decide who is "lawful" to surveil, or to kill.

- What does the "supply chain risk" designation mean?

It's Washington's way of saying, "You're not invited to the party," but with more paperwork.

- Will Anthropic's AI services still operate during the lawsuit?

Yes, unless the courts decide to cancel all tech startups as a public service.


2026-03-09 22:57